The Case For AI Security Tax Incentives & How They Could Work

Americans for Responsible Innovation recently released a proposal to institute a tax incentive for research on AI security and responsible AI development. We developed this proposal for Congress to consider as part of the upcoming budget reconciliation package. Here, we provide a high-level explanation for why a tax incentive for research on AI security and responsible AI development may be a good idea, and how our proposal could work.

Why a tax incentive for AI security and responsible AI development?

AI is increasingly becoming integrated into the economy and embedded in our society. At the same time, our national security and public wellbeing may increasingly depend on our ability to fully harness the benefits of AI while also mitigating the risks it can present. To achieve this, it is critical to boost research on AI security and responsible AI development by leading developers, who possess the financial means, the technical expertise, and the greatest access to the cutting edge of this technology.

A method to recalibrate financial incentives

AI developers currently allocate substantially more capital toward producing increasingly capable and commercially attractive AI systems than toward research on AI security and responsible AI development. This AI investment differential between capabilities development on the one hand and security and responsibility research on the other reflects a zero-sum framing in which such research is a positive economic externality: it benefits the public interest, but it does not necessarily benefit developers financially. Developers may also believe that investing in security and responsibility research impedes their ability to bring products to market and thus ultimately obstructs technological and economic competitiveness.

Key developments at OpenAI illustrate this challenge. In 2023, OpenAI announced that it would allocate 20% of its compute budget toward a new superalignment team dedicated to AI safety research on severe risks from AI. Less than a year later, in 2024, this superalignment team was disbanded and its co-leads resigned.1 Separately, in 2024 OpenAI’s Preparedness Team reportedly rushed safety evaluations for the launch of its flagship GPT-4o model to maintain market advantage over competitors. A post-launch internal analysis found GPT-4o actually exceeded OpenAI’s internal standards for harmful persuasion.

These incidents at OpenAI demonstrate how commercialization pressures can create and deepen a trade-off dynamic between rapid productization of frontier models and security and responsibility research. A tax incentive scheme of the kind we propose could help recalibrate this AI investment differential by presenting substantial financial incentives that also reduce the net cost of security and responsibility research. In economic terms, the proposed tax incentive would help developers internalize the positive externalities of security and responsibility research, thereby integrating societal gains directly into their financial motivations.

A path to (properly) align AI development & national security

Beyond the economic dynamics of AI security and responsibility research, broader geopolitical and national security considerations likely exacerbate the AI investment differential.

For example, consider the Entente strategy – the notion of an international democratic coalition collaboratively controlling frontier AI technology while excluding authoritarian adversaries. This strategy has gained significant traction among U.S. AI developers, national security experts, and the U.S. Executive Branch. To be sure, the Entente strategy may be critical for maintaining U.S. leadership in AI. However, the national security perspective upon which it rests demands maintaining perpetual technological superiority over our geopolitical adversaries. This imperative can amplify the AI investment differential by creating strategic pressures to lead in AI capabilities beyond those already generated by economic incentives. In doing so, the imperative risks overshadowing the critical importance of concurrently advancing security and responsibility research.

The paradox is that as AI systems increasingly develop powerful capabilities, security and responsibility research becomes more and more vital to national security. For instance, the closer leading AI developers come to achieving artificial general intelligence (AGI), the more important it becomes that we be able to control such powerful AI and to mitigate whatever risks it may present.

A tax incentive scheme of the kind we propose could help address this national security paradox. By helping to recalibrate the AI investment differential through targeted incentives to boost security and responsibility research, the proposal aims to promote discovery of control and risk mitigation solutions for increasingly powerful AI.

How would our proposal work?

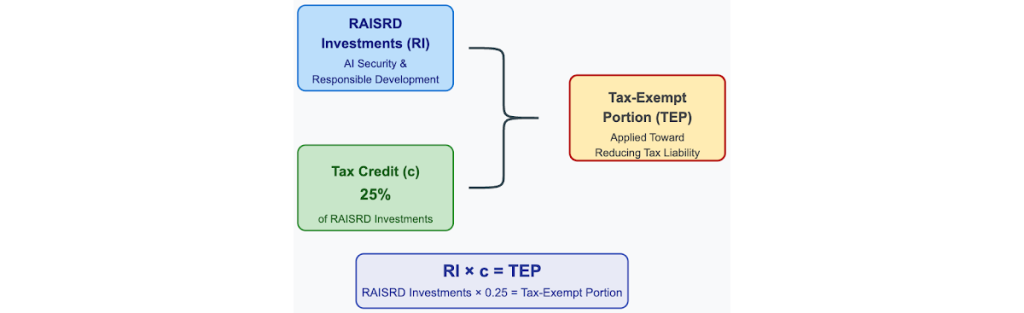

We propose implementing a voluntary tax incentive structure that rewards sustained investment in research on AI security and responsible AI development (RAISRD). The incentive would consist of a 25% tax credit for developers of frontier models with security and responsibility research programs. The credit would be calculated from RAISRD qualifying investments (explained below) and then applied toward reducing an AI developer’s tax liability, for example by making a portion of an AI developer’s income before taxes tax-exempt.2

To illustrate, imagine a fictional case of an AI developer called Matrix, with an income before taxes in 2024 of USD $1 billion and RAISRD qualifying investments in 2024 of USD $40 million. Applying a tax credit rate of 25% to Matrix’s RAISRD qualifying investments gives USD $10 million – the tax-exempt portion which Matrix may use to reduce tax liability on their 2024 income before taxes.
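The arithmetic in the Matrix example can be sketched in a few lines of Python. This is only an illustration of the credit amount itself: the function name `raisrd_credit` and the constant `CREDIT_RATE` are our own labels, not terms from the proposal, and the sketch deliberately does not model how the resulting amount is applied against tax liability.

```python
from decimal import Decimal

# 25% credit rate, as stated in the proposal.
CREDIT_RATE = Decimal("0.25")


def raisrd_credit(qualifying_investments: Decimal,
                  rate: Decimal = CREDIT_RATE) -> Decimal:
    """Return the credit amount for a given level of RAISRD qualifying investments.

    Hypothetical helper for illustration only; it computes
    rate * qualifying investments and nothing more.
    """
    if qualifying_investments < 0:
        raise ValueError("qualifying investments cannot be negative")
    return qualifying_investments * rate


# Fictional developer "Matrix": USD 40 million of qualifying investments in 2024.
matrix_credit = raisrd_credit(Decimal("40000000"))
print(f"Matrix credit: ${matrix_credit:,.0f}")
```

Changing the `rate` argument gives a rough sense of how other permutations of the scheme would scale the credit.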

Below, we present an interactive visualization of our tax credit formula. Its variables can be adjusted to represent many different permutations of our tax incentive scheme.

Tax Credit Qualifications

We outline several requirements in our proposal that a developer must meet in order to claim the tax credit.

RAISRD qualifying research areas

Our proposal takes an expansive approach to what research may qualify for the tax incentive, listing eight key RAISRD areas:

- AI Security: Development of protections against adversarial attacks and unauthorized access.

- AI Alignment: Approaches to ensuring AI systems align with human values.

- Transparency: Advancement of interpretability and explainability measures.

- AI Governance: Development of oversight frameworks and risk management practices.

- Data Privacy: Development of privacy-preserving methodologies.

- AI Safety: Investigation of robustness and fail-safe mechanisms.

- Fairness: Methods for ensuring that all users enjoy comparable benefits.

- Human-AI Collaboration: Research on effective human-AI interaction paradigms.

Maintenance of effort

A developer’s RAISRD qualifying investments must exceed their previous year’s baseline spending on RAISRD qualifying research areas. This maintenance of effort requirement ensures that the tax credit incentivizes new RAISRD qualifying investments rather than subsidizing existing investments.
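As a minimal sketch, the maintenance of effort condition reduces to a strict comparison between two years of spending. The function name and the dollar figures below are hypothetical, and the sketch assumes "exceed" means strictly greater than the prior year; it takes no position on whether the credit applies to all qualifying investments or only to the increase, which the text above does not specify.

```python
def meets_maintenance_of_effort(current_year_spend: int,
                                prior_year_spend: int) -> bool:
    """Check the maintenance of effort requirement (illustrative only).

    Returns True only if this year's RAISRD qualifying investments
    strictly exceed the previous year's baseline spending.
    """
    return current_year_spend > prior_year_spend


# Hypothetical figures in USD: spending rises from 35 million to 40 million.
eligible = meets_maintenance_of_effort(40_000_000, 35_000_000)

# Hypothetical figures in USD: flat spending does not satisfy the requirement.
not_eligible = meets_maintenance_of_effort(35_000_000, 35_000_000)
```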

Research publications

A developer claiming the tax credit must publish research findings accruing from RAISRD qualifying investments in order to benefit the broader AI community. This ensures one concrete way by which the tax incentive scheme serves the public good. It also provides a straightforward means for third-party assessment of whether the tax incentive scheme is promoting meaningful security and responsibility research. Our proposal includes a provision that published research findings may be subject to appropriate security considerations and redaction protocols as necessary. Additionally, published research must meet certain standards (to be detailed by the implementation and enforcement authorities) for the developer to claim the tax credit.

Preparedness Framework

A developer claiming the tax credit must maintain and update a public Preparedness Framework (PF). In a nutshell, a PF helps the public understand (a) how a developer plans to evaluate their AI systems as they develop powerful capabilities (potentially reaching certain dangerous capability thresholds), and also (b) how a developer aims to maintain important security measures as model capabilities scale. Among major U.S. AI developers with expressed interest in developing powerful AI such as AGI, OpenAI, Anthropic and Google have public-facing functional PFs, and PFs may be gaining traction as a widespread industry best practice.

Within our proposal, PFs sufficient for claiming the tax credit would need to include certain core elements: (1) Security protocols; (2) Risk assessment frameworks; (3) IF-THEN statements of what actions would be triggered if dangerous capability thresholds are crossed; (4) Transparency measures; (5) Ethical development commitments; (6) Human oversight mechanisms; (7) Incident response procedures; and (8) Strategies to ensure fair treatment of users.

Implementation

The Treasury Department and Internal Revenue Service (IRS) would administer the credit and enforce the conditions to claim it. Furthermore, Treasury and IRS would be able to consult with the National Institute of Standards and Technology, the Federal Trade Commission, and other relevant agencies in setting definitions, eligibility requirements, and standards for fulfilling the research publication requirement.

In summary

Our proposal to implement a tax incentive for research on AI security and responsible AI development aims to recalibrate financial incentives and promote national security. By incentivizing security and responsibility research and reducing its associated net costs, we can help ensure that advances in AI capabilities are matched by corresponding advances in security and responsibility measures. In this way, the potential of AI can be realized while maintaining strong security standards and protecting the public from harm.

1 One of the co-leads, Jan Leike, stated in a resignation post, “Sometimes we were struggling for compute and it was getting harder and harder to get this crucial research done […] safety culture and processes [at OpenAI] have taken a backseat to shiny products.”

2 For the case of developers operating at a loss, the tax credit could be configured, for example, as refundable, transferable, or tradeable.